
25 March 2024

New in March 2024

Improvements to cloud storage and volume mounts, fine-tuning Mixtral 8x7B, and more

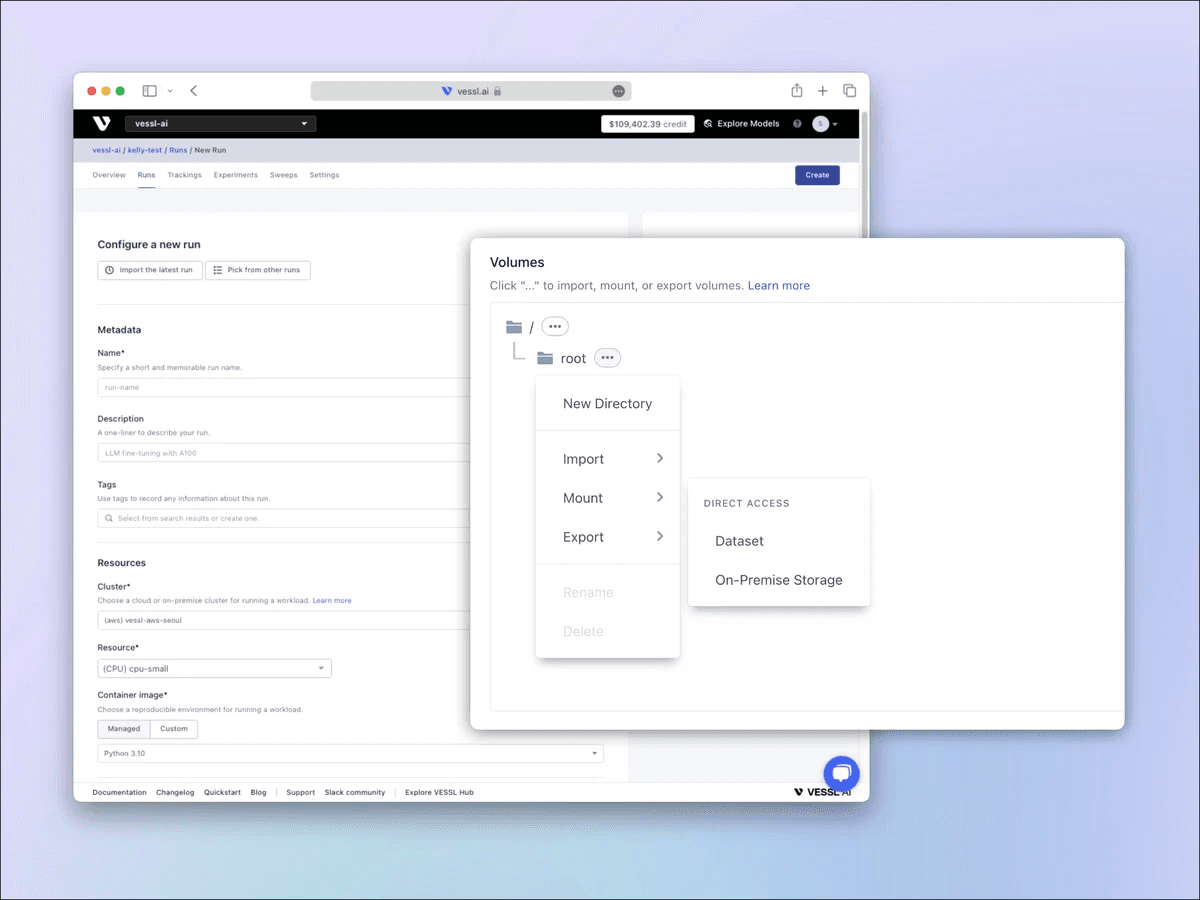

Mounting storage volumes and keeping data persistent across sessions or instance restarts can quickly become overwhelming when you work with large models and multiple instances. Specifying access permissions, choosing the correct mount points, and defining the destination format and location get complicated in distributed systems and container orchestration, where each instance or container may need access to shared or isolated data volumes.

This month, we are excited to bring new features and improvements that let you mount object storage such as AWS S3 and Google Cloud Storage directly to your workloads in just a few clicks. We also added new models to VESSL Hub, along with an in-depth tutorial on fine-tuning Mixtral 8x7B on a single GPU with custom datasets generated by GPT.

Mount object storage directly to your workload

You can now import data from cloud storage providers such as AWS S3 and Google Cloud Storage (GCS) into your runs. An import copies every file under the path you define from the bucket. When training or fine-tuning finishes, you can export results such as logs and checkpoints back to the same bucket. To bring your own cloud storage, add its credentials on our improved Secrets page. Refer to our docs for a step-by-step guide.
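VESSL performs the import and export for you. To illustrate what "copies all the files in the defined path" means, here is a hand-written sketch using the same google-cloud-storage Python client as the SDK example in the next section. `import_prefix` and `export_dir` are hypothetical helper names, not part of VESSL or the GCS library, and this is not how VESSL implements the feature.

```python
from pathlib import Path


def import_prefix(bucket, prefix: str, dest_dir: str) -> list:
    """Download every object under `prefix` into `dest_dir`, keeping relative paths.

    `bucket` is duck-typed: anything whose list_blobs(prefix=...) yields objects
    with `.name` and `.download_to_filename()`, e.g. google.cloud.storage.Bucket.
    Use a prefix ending in "/" (e.g. "data/") so "data2/..." is not matched.
    """
    written = []
    for blob in bucket.list_blobs(prefix=prefix):
        rel = blob.name[len(prefix):].lstrip("/")
        if not rel or blob.name.endswith("/"):
            continue  # skip the prefix itself and "folder" placeholder objects
        target = Path(dest_dir) / rel
        target.parent.mkdir(parents=True, exist_ok=True)
        blob.download_to_filename(str(target))
        written.append(str(target))
    return written


def export_dir(bucket, src_dir: str, prefix: str) -> list:
    """Upload every file under `src_dir` as an object named prefix + relative path."""
    uploaded = []
    root = Path(src_dir)
    for path in sorted(root.rglob("*")):
        if path.is_file():
            name = prefix + path.relative_to(root).as_posix()
            bucket.blob(name).upload_from_filename(str(path))
            uploaded.append(name)
    return uploaded


# Hypothetical usage with the real client (needs credentials, so left commented out):
# from google.cloud import storage
# bucket = storage.Client().bucket("my-bucket")
# import_prefix(bucket, "datasets/train/", "/data/train")
# export_dir(bucket, "/output/checkpoints", "runs/exp-1/checkpoints/")
```

Taking the bucket as a parameter keeps credential handling (what the Secrets page covers on VESSL) out of the helpers, and lets them run against any object that mimics the few client methods they call.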

FUSE support for Google Cloud Storage

As part of our quality-of-life improvements to volumes and datasets, we are adding FUSE support for GCS. FUSE lets you work with object storage through familiar filesystem operations instead of the GCS SDKs. That means less time reading GCS documentation and more time on your model. Here's an example comparing an upload through the Python SDK with the same operation on a FUSE-mounted bucket.

```python
from google.cloud import storage

# Without FUSE: initialize a Google Cloud Storage client from a service account key
storage_client = storage.Client.from_service_account_json('your-service-account-file.json')

# Reference an existing bucket
bucket = storage_client.get_bucket('my-bucket')

# Upload a file
blob = bucket.blob('remote_file.txt')
blob.upload_from_filename('local_file.txt')
```

```bash
# With FUSE: the mount point must be an existing directory
mkdir -p /path/to/mountpoint

# Mount a Google Cloud Storage bucket to the directory
gcsfuse my-bucket /path/to/mountpoint

# Copy a file to the mounted bucket
cp local_file.txt /path/to/mountpoint/remote_file.txt

# List files in the mounted bucket
ls /path/to/mountpoint

# Read a file from the mounted bucket
cat /path/to/mountpoint/remote_file.txt
```

Fine-tune Mixtral 8x7B with custom datasets

In this month's model update, we discuss how you can use GPT-4 to generate domain-specific Q&A datasets and fine-tune Mixtral 8x7B on them for improved performance. We also show how fine-tuning strengthens a model's contextual understanding, and compare the performance trade-offs between two go-to methods, LoRA and QLoRA. Read more
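To put the LoRA versus QLoRA trade-off in rough numbers, here is a back-of-the-envelope sketch. It assumes Mixtral 8x7B has about 46.7B total parameters (all eight experts stay in memory even though only two are routed per token), uses an illustrative 4096×4096 projection with rank 8, and counts only the frozen base weights, ignoring activations, optimizer state, and quantization overhead. LoRA trains two low-rank factors per adapted matrix while freezing the original weight; QLoRA keeps that scheme but stores the frozen base in 4-bit instead of 16-bit. The helper names are ours, not from the linked post.

```python
def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix.

    LoRA learns B (d_out x rank) and A (rank x d_in); the original weight is frozen.
    """
    return rank * (d_in + d_out)


def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Memory for the frozen base weights only, in GB (assumes 1 GB = 1e9 bytes).

    Ignores activations, optimizer state, and quantization constants.
    """
    return num_params * bits_per_param / 8 / 1e9


# Assumption: ~46.7B total parameters for Mixtral 8x7B.
MIXTRAL_PARAMS = 46.7e9

full = 4096 * 4096                      # illustrative projection size
adapter = lora_params(4096, 4096, rank=8)
print(f"4096x4096 projection: {full:,} frozen vs {adapter:,} trainable (r=8)")
print(f"16-bit base weights (LoRA): {weight_memory_gb(MIXTRAL_PARAMS, 16):.1f} GB")
print(f"4-bit base weights (QLoRA): {weight_memory_gb(MIXTRAL_PARAMS, 4):.1f} GB")
```

At 16 bits per weight the base model alone needs about 93 GB, more than a single 80 GB GPU holds; at 4 bits it drops to about 23 GB. That gap is consistent with the single-GPU setup the tutorial targets, while the adapters themselves stay a tiny fraction of each matrix either way.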

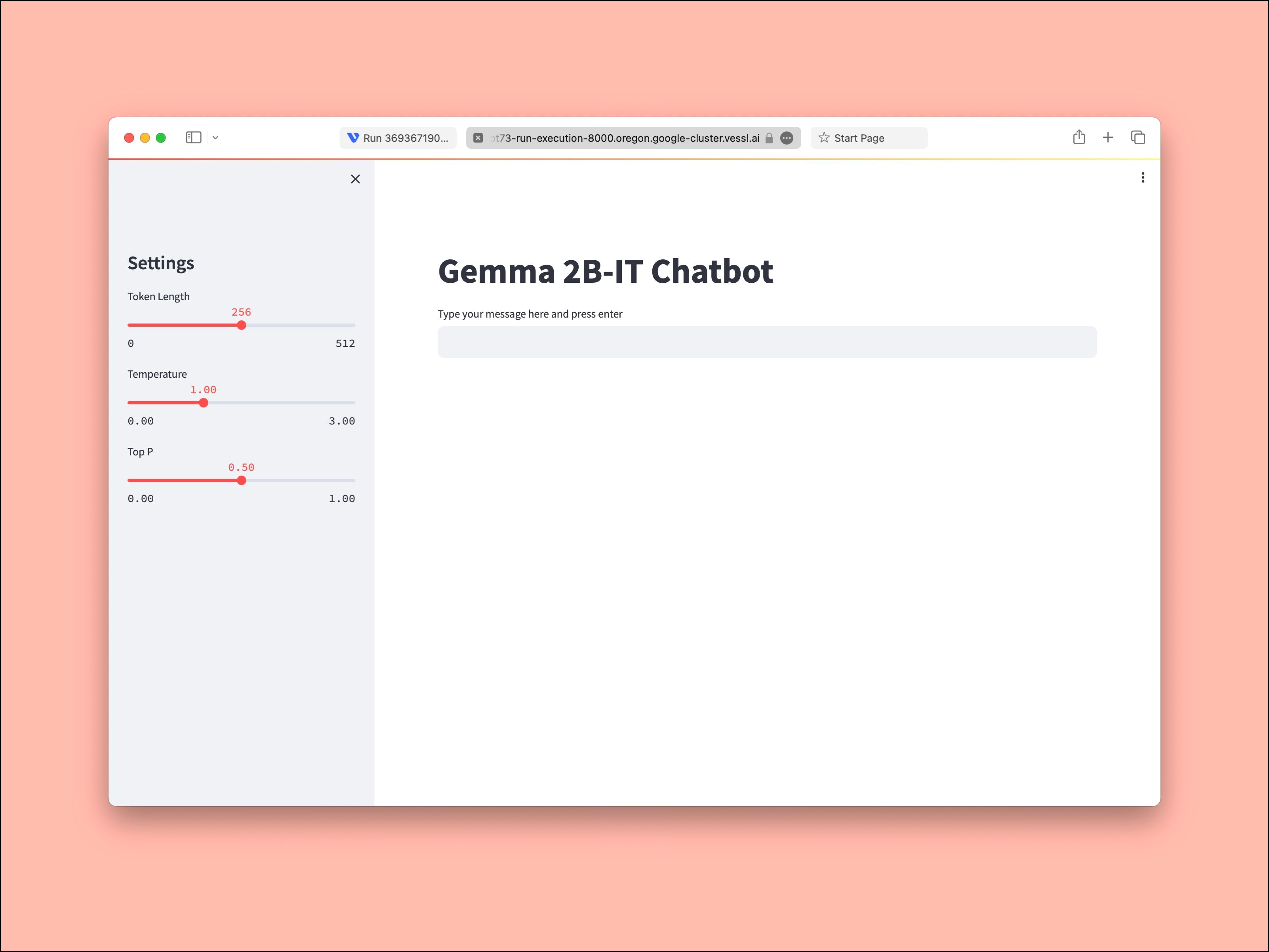

While you are there, check out our Streamlit app for Gemma-2B as well.

That's it for this month. We'll be back with the newest updates and the latest models next month. See you soon.

Yong Hee

Growth Manager
